Entropy definition chemistry

8/24/2023

A spontaneous process is one which happens naturally without the need of outside energy or work to help it along. The second law of thermodynamics gives one formula for entropy: ΔSuniv > 0, which put into words states that any spontaneous process increases the entropy of the universe (creates a positive change). The law and the formula essentially state that the universe naturally prefers greater levels of entropy: any process that increases the total entropy is allowed, while any process that would decrease it is prohibited.

A microstate is a particular way to order energy. In Boltzmann’s formula, W measures how many energetically equivalent microstates a system can organize its energy into to get the same macrostate (the system as we can observe it on a human scale).
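As a minimal sketch of the second-law criterion, the entropy change of the universe can be treated as the sum of the change in a system and the change in its surroundings. That split, the function name `is_spontaneous`, and the sample numbers are assumptions made for illustration; they do not come from the original post.

```python
def is_spontaneous(delta_s_system, delta_s_surroundings):
    """Return True if the total entropy change of the universe is positive.

    Both arguments are entropy changes in J/K. Splitting the universe into
    "system" and "surroundings" is an assumption made for this sketch.
    """
    delta_s_universe = delta_s_system + delta_s_surroundings
    return delta_s_universe > 0


# Hypothetical numbers: the system loses entropy, but the surroundings gain more.
print(is_spontaneous(-22.0, 24.5))  # True

# Hypothetical numbers: the total entropy would decrease, so this is not allowed.
print(is_spontaneous(-22.0, 10.0))  # False
```

Note that the system's own entropy is allowed to fall in the first case; only the total has to rise.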

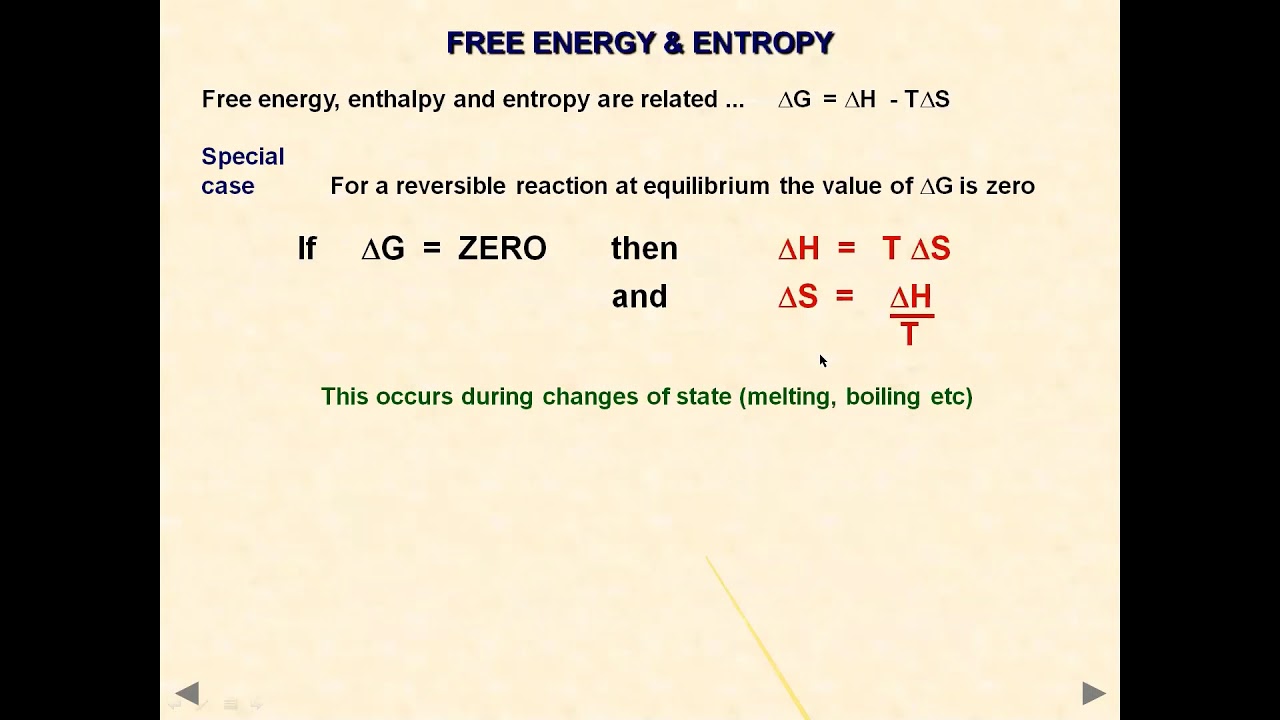

There are several formulas for entropy, because entropy can be explained and used in a variety of ways. One is Boltzmann’s equation: S = k*ln(W), where S is entropy (the usual variable for entropy), k is Boltzmann’s constant, which equals the gas constant divided by Avogadro’s number and is approximately 1.38 x 10^(-23) J/K, and W is the number of microstates, which is a unitless quantity. Boltzmann’s formula thus takes a more mathematical approach to entropy by using W. The unit for entropy is the same as that of Boltzmann’s constant (J/K), which means that entropy can also be understood as how much energy can be dispersed at a certain temperature, since entropy is temperature dependent.
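The relationship between the constants and Boltzmann's equation can be checked numerically. The snippet below is only a sketch: the names `K_B` and `boltzmann_entropy` and the sample values of W are illustrative choices, and the constants are the standard SI values.

```python
import math

R = 8.314462618       # gas constant, J/(mol*K)
N_A = 6.02214076e23   # Avogadro's number, 1/mol
K_B = R / N_A         # Boltzmann's constant, about 1.38e-23 J/K


def boltzmann_entropy(w):
    """Entropy S = k * ln(W) in J/K for a system with W microstates."""
    if w < 1:
        raise ValueError("W must be at least 1")
    return K_B * math.log(w)


print(f"k = {K_B:.3e} J/K")
print(boltzmann_entropy(1))        # one microstate: S = 0.0
print(boltzmann_entropy(10**20))   # more microstates: larger S
```

Because of the logarithm, multiplying W by a fixed factor always adds the same amount of entropy, no matter how large W already is.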

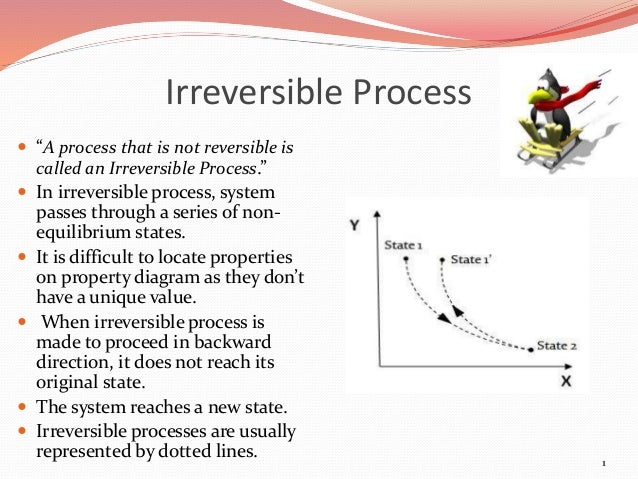

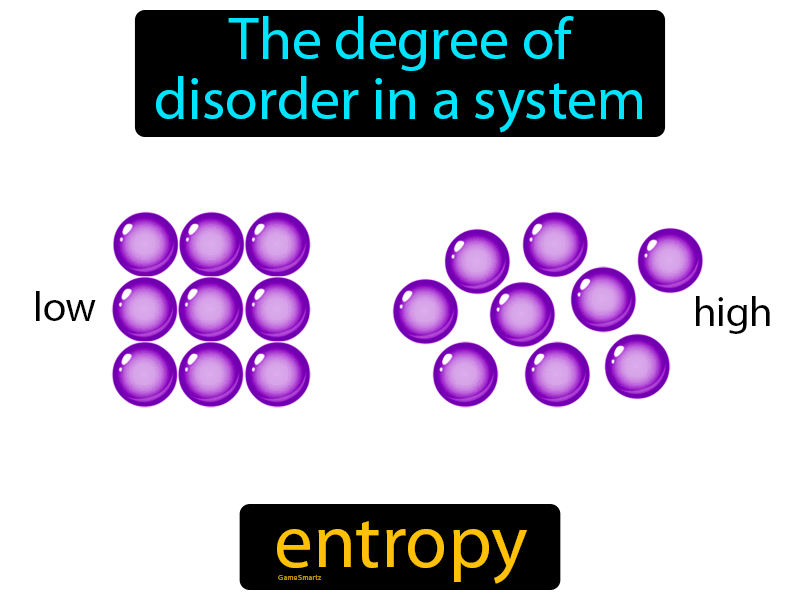

First, it’s helpful to properly define entropy, which is a measurement of how dispersed matter and energy are in a certain region at a particular temperature. Since entropy deals primarily with energy, it’s intrinsically a thermodynamic property (in this chemical sense, there isn’t a non-thermodynamic entropy). As far as a formula for entropy goes, there isn’t just one.

Statistical Entropy: Entropy is a state function that is often erroneously referred to as the 'state of disorder' of a system. Qualitatively, entropy is simply a measure of how much the energy of atoms and molecules becomes more spread out in a process, and it can be defined in terms of the statistical probabilities of a system or in terms of the other thermodynamic quantities.

Statistical Entropy - Mass, Energy, and Freedom: The energy or the mass of a part of the universe may increase or decrease, but only if there is a corresponding decrease or increase somewhere else in the universe. The freedom in that part of the universe, by contrast, may increase with no change in the freedom of the rest of the universe. There might be decreases in freedom in the rest of the universe, but the sum of the increase and decrease must result in a net increase.

Simple Entropy Changes - Examples: Several examples are given to demonstrate how the statistical definition of entropy and the 2nd law can be applied: phase change, gas expansions, dilution, colligative properties and osmosis.
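Gas expansion is a convenient case for seeing the statistical definition at work. The sketch below assumes an ideal gas expanding at constant temperature, which is an assumption added here and not stated in the text. Under that assumption the number of positions available to each molecule scales with volume, so W scales as V^N and S = k*ln(W) gives ΔS = n*R*ln(V2/V1). The function name and sample values are illustrative.

```python
import math

R = 8.314462618  # gas constant, J/(mol*K)


def expansion_entropy_change(n_moles, v_initial, v_final):
    """Entropy change in J/K for an isothermal ideal-gas volume change.

    Assumes an ideal gas at constant temperature; the two volumes only
    need to share the same unit, because only their ratio matters.
    """
    if n_moles <= 0 or v_initial <= 0 or v_final <= 0:
        raise ValueError("amount and volumes must be positive")
    return n_moles * R * math.log(v_final / v_initial)


# One mole of gas doubling its volume.
delta_s = expansion_entropy_change(1.0, 1.0, 2.0)
print(f"{delta_s:.2f} J/K")  # about 5.76 J/K
```

The result is positive for expansion and negative for compression, matching the idea that energy becomes more spread out when the gas has more room.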

‘Disorder’ in Thermodynamic Entropy: Boltzmann’s sense of “increased randomness” as a criterion of the final equilibrium state for a system compared to initial conditions was not wrong. The problem was his surprisingly simplistic conclusion: if the final state is random, the initial system must have been the opposite, i.e., ordered. “Disorder” was the consequence, to Boltzmann, of an initial “order”, not - as is obvious today - of what can only be called a “prior, lesser but still humanly-unimaginable, large number of accessible microstates”.

Microstates: Dictionaries define “macro” as large and “micro” as very small, but a macrostate and a microstate in thermodynamics aren't just definitions of big and little sizes of chemical systems. Instead, they are two very different ways of looking at a system. A microstate is one of the huge number of different accessible arrangements of the molecules' motional energy for a particular macrostate.
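To make the macrostate/microstate distinction concrete, here is a toy model that is not part of the original text: a handful of distinguishable oscillators sharing a fixed number of identical energy quanta. The macrostate is the total energy; each specific way of distributing the quanta is one microstate. The function names are hypothetical, and the count uses the standard stars-and-bars formula.

```python
from itertools import product
from math import comb


def count_microstates(n_oscillators, n_quanta):
    """Number of ways to share n_quanta identical energy quanta among
    n_oscillators distinguishable oscillators (stars-and-bars count)."""
    return comb(n_quanta + n_oscillators - 1, n_quanta)


def list_microstates(n_oscillators, n_quanta):
    """Brute-force list of every microstate as a tuple of quanta per oscillator."""
    return [
        state
        for state in product(range(n_quanta + 1), repeat=n_oscillators)
        if sum(state) == n_quanta
    ]


# Macrostate: 3 oscillators holding 2 quanta in total.
for state in list_microstates(3, 2):
    print(state)
print(count_microstates(3, 2))  # 6
```

Even this tiny system has six microstates for one macrostate; for real systems with on the order of 10^23 molecules, the count becomes the "humanly-unimaginable" number described above.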
